These are notes I made while investigating RAG and completing the Dometrain course Let’s Build It: AI Chatbot with RAG in .NET Using Your Data by James Charlesworth. I highly recommend completing the course and supporting Dometrain ❤️

Terms

RAG has associated terms that we need to understand before building retrieval systems:

- RAG - Retrieval Augmented Generation

- Vector database - a database designed to store embeddings (numeric vectors) and retrieve the most similar items quickly

- Embeddings - a numerical representation of text where similar meaning ends up close together in vector space; this is what allows semantic search to find relevant content even when exact keywords do not match

- Retrieval techniques - similarity methods used to find the nearest vectors to a query embedding

  - Cosine Similarity

  - Euclidean Distance

  - Dot Product

- Token - the chunks text is split into for model input and output; token count affects context limits and cost

- HyDE - Hypothetical Document Embeddings, where the model generates a hypothetical answer first and embeds that text to improve retrieval

- Tools - functions or APIs exposed to an AI agent so it can act beyond text generation

- LLM - Large language model

- Keyword Search - exact or partial term matching against indexed text, without understanding meaning

- Semantic Search - searches by meaning using embeddings, so related phrasing can match even when exact words differ

- Prompt Stuffing - putting source content directly into the prompt; fine for small datasets, but for larger corpora retrieval from a vector database scales better

- Dimensions - the number of values in each embedding vector (for example 1536); more dimensions can capture more nuance, but increase storage and compute costs
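The three retrieval techniques listed above can be sketched in a few lines. This is a minimal illustration in plain Python, not how a vector database implements them; the function names and the two toy 2D vectors are my own assumptions, and real embeddings have far more dimensions.

```python
import math

def dot_product(a, b):
    """Sum of element-wise products; larger means the vectors are more aligned (and longer)."""
    return sum(x * y for x, y in zip(a, b))

def euclidean_distance(a, b):
    """Straight-line distance between the two points; smaller means more similar."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def cosine_similarity(a, b):
    """Cosine of the angle between the vectors, ignoring their lengths (range -1 to 1)."""
    length_a = math.sqrt(dot_product(a, a))
    length_b = math.sqrt(dot_product(b, b))
    return dot_product(a, b) / (length_a * length_b)

# Toy vectors standing in for a query embedding and a document embedding (made up).
query = [4.0, 3.0]
doc = [3.0, 4.0]

print(dot_product(query, doc))         # 24.0
print(euclidean_distance(query, doc))  # about 1.414
print(cosine_similarity(query, doc))   # 0.96
```

Note that a higher score is better for cosine similarity and dot product, while a lower value is better for Euclidean distance.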

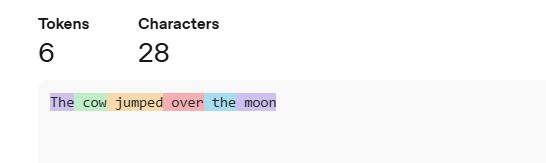

Tokens

OpenAI has a free tokenizer tool available at https://platform.openai.com/tokenizer. I used their tool to create the example below.

“A helpful rule of thumb is that one token generally corresponds to ~4 characters of text for common English text. This translates to roughly ¾ of a word (so 100 tokens ~= 75 words)”
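The rule of thumb above can be turned into a quick estimator. This is only a rough sketch for budgeting context size and cost; the function names are hypothetical, and a real tokenizer will give different counts depending on the model and the text.

```python
def estimate_tokens(text: str) -> int:
    """Rough estimate: about 4 characters of common English text per token."""
    return round(len(text) / 4)

def estimate_words(token_count: int) -> int:
    """Rough estimate: one token is about three quarters of a word."""
    return round(token_count * 0.75)

sentence = "The cow jumped over the moon"  # 28 characters
print(estimate_tokens(sentence))  # 7
print(estimate_words(100))        # 75
```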

Models

These can come from any provider, such as OpenAI, Google's Gemini, Voyage AI, etc.

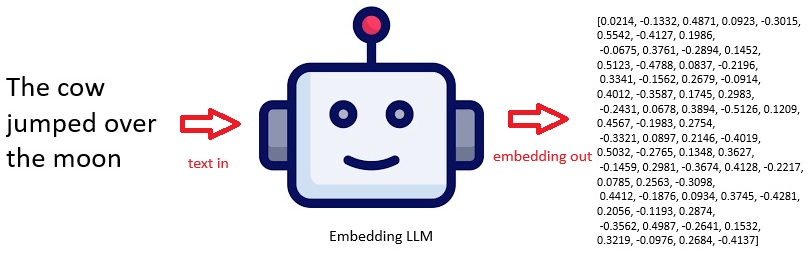

Embedding Models

Embedding models are trained not to complete text but to look at the entire input text and work out what it means. The result is a vector representation of that meaning. They have a similar neural network architecture to completion models and still use attention and transformer layers, but they are specialised models built specifically for producing embeddings.

- The above is a representative embedding, not from a specific fixed model instance.

- Real embeddings (e.g. from OpenAI embeddings API) are typically hundreds to thousands of dimensions and deterministic per model.

- If you need exact reproducible vectors, you must specify the exact model (e.g. text-embedding-3-large) and compute it via API.

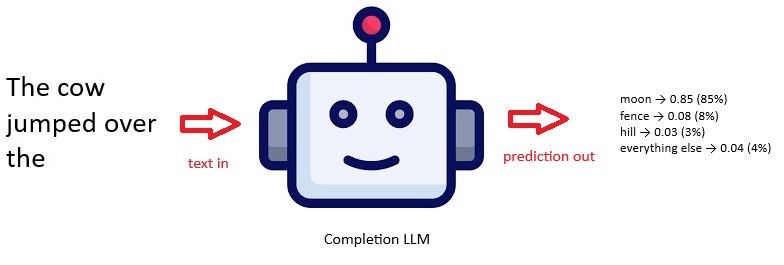

Completion Models

Embedding models are not the same as completion models. Most people know ChatGPT, which is popular for answering questions because it can identify patterns in text. These patterns can be used to predict the next word in a string.

Completion models use a special type of neural network architecture, the transformer, whose layers use attention mechanisms. We give the model text like “The cow jumped over the” and it works out the meaning of this text, which it uses to predict what the next word in the sentence is going to be. The output is a probability distribution over the possible next words. Each time a word is added to the sentence, the process repeats.

So for the completion example above:

- moon — very high probability (classic phrase)

- fence — moderate probability (common real-world continuation)

- hill — lower but plausible

Note that 0.85 is a probability, not a percentage, but you can convert it to one: 0.85 = 85%. So building on the example, moon → 0.85 means the model assigns about an 85% chance that “moon” is the next token. These probabilities are relative to all possible next tokens, and they sum to 1 (or 100%) across the whole vocabulary.
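To make the "sums to 1" point concrete, here is a small sketch of softmax, the standard function for turning raw model scores into probabilities. The three scores are made up for illustration and the vocabulary is cut down to three words; a real model scores every token in its vocabulary.

```python
import math

def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    highest = max(scores.values())
    # Subtracting the highest score keeps exp() numerically stable.
    exps = {token: math.exp(s - highest) for token, s in scores.items()}
    total = sum(exps.values())
    return {token: e / total for token, e in exps.items()}

# Hypothetical raw scores for the word after "The cow jumped over the".
raw_scores = {"moon": 4.0, "fence": 2.0, "hill": 1.0}

probabilities = softmax(raw_scores)
next_token = max(probabilities, key=probabilities.get)

for token, p in probabilities.items():
    print(f"{token}: {p:.2f} ({p:.0%})")  # moon comes out at about 0.84
print(next_token)  # moon
```

Picking the highest probability every time is called greedy decoding. In practice models usually sample from the distribution instead, which is why the same prompt can produce different completions.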

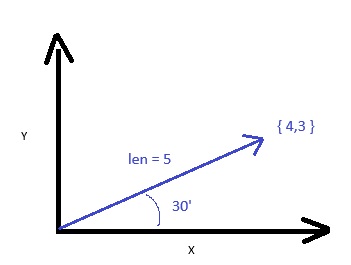

Vectors

In physics, a vector is defined as a quantity that has a magnitude and a direction.

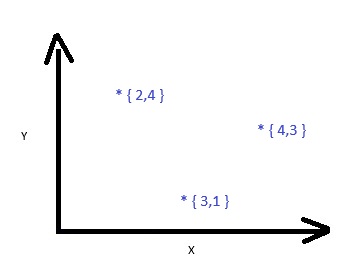

2D Example Vector

This 2D example shows a vector with a direction (drawn as roughly a 30 degree angle; the exact angle for this point is about 36.9 degrees) and a magnitude (length) of 5. For this example {4,3} represents the meaning of some text. This can be understood as a co-ordinate of 4,3, so 4 on the X axis and 3 on the Y axis. This is a point in 2 dimensional space.
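As a quick check of the numbers, the magnitude and direction of the point (4, 3) can be worked out with Pythagoras and a bit of trigonometry. A minimal sketch using only the Python standard library:

```python
import math

x, y = 4.0, 3.0

# Magnitude: the length of the line from the origin to the point (Pythagoras).
magnitude = math.hypot(x, y)

# Direction: the angle between the vector and the X axis, in degrees.
angle_degrees = math.degrees(math.atan2(y, x))

print(magnitude)                # 5.0
print(round(angle_degrees, 2))  # 36.87
```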

You can then imagine several vectors in this 2D space

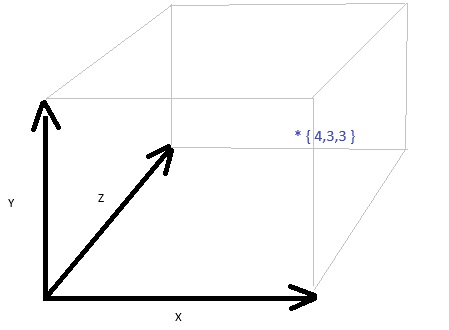

3D Example Vector

Vectors can have as many dimensions as you like, and in machine learning they typically have hundreds or thousands of dimensions, but anything more than 3D is hard to draw.

512 Dimensions

This is an example vector with 512 dimensions, with the numbers normalized to be between -1 and +1. It is an array of 512 floating point numbers; my example below has been shortened to just 24 for readability.

[-0.03503418, -0.024047852, 0.05895996, 0.031433105, -0.039367676, 0.0027675629, 0.045806885, -0.02381897, 0.007911682, 0.033081055, -0.00030350685, 0.015609741, 0.0046920776, 0.044708252, 0.009407043, -0.03201294, -0.016052246, -0.045654297, 0.08605957, 0.05105591, 0.06854248, 0.030715942, -0.06970215, -0.010292053, ...]
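One common way to get values into the -1 to +1 range is to scale the vector to a length of 1 (L2 normalization). I am assuming that is what "normalized" means here; the notes do not say which method was used. The function name and the toy 3D vector are made up for illustration.

```python
import math

def l2_normalize(vector):
    """Scale a vector so its length is 1; every value then lies between -1 and +1."""
    length = math.sqrt(sum(v * v for v in vector))
    if length == 0:
        return list(vector)  # a zero vector has no direction, so leave it alone
    return [v / length for v in vector]

raw = [4.0, 3.0, -12.0]   # length is 13
unit = l2_normalize(raw)  # [4/13, 3/13, -12/13]

print(unit)
print(math.sqrt(sum(v * v for v in unit)))  # very close to 1.0
```

A nice side effect of unit-length vectors is that the dot product and cosine similarity give the same result, because the lengths being divided out are both 1.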

Vectors are useful because they allow you to do calculations in machine learning and AI, and they can be fed into neural networks. The most basic calculation is finding out how similar two vectors are to each other, which is the technique that underpins semantic search.
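Here is a rough sketch of how that similarity calculation turns into semantic search: score every stored vector against the query vector and keep the closest ones. The 3D vectors are made up by hand to stand in for real embeddings, and the brute-force loop is only for illustration; a vector database uses indexes to avoid comparing against every item.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    length_a = math.sqrt(sum(x * x for x in a))
    length_b = math.sqrt(sum(y * y for y in b))
    return dot / (length_a * length_b)

def top_k(query_vector, documents, k=2):
    """Return the k (score, text) pairs most similar to the query, best first."""
    scored = [(cosine_similarity(query_vector, vec), text)
              for text, vec in documents.items()]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:k]

# Hypothetical hand-made "embeddings"; a real system would get these from an embedding model.
documents = {
    "Cows graze in the field": [0.9, 0.1, 0.0],
    "The moon orbits the Earth": [0.1, 0.9, 0.1],
    "Cattle eat grass": [0.8, 0.2, 0.1],
}
query = [0.85, 0.15, 0.05]  # pretend embedding of "What do cows eat?"

results = top_k(query, documents)
for score, text in results:
    print(f"{score:.3f}  {text}")
```

The query shares no keywords with "Cattle eat grass", but its vector points in nearly the same direction, so it still ranks in the top two while the moon sentence is left out.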

References

- https://github.com/Dometrain/ai-chatbot-using-your-data-in-dotnet

- https://platform.openai.com/

  - a platform that gives API access to LLMs

- https://platform.openai.com/playground/images

  - a playground for creating images from text

- https://platform.claude.com/docs/en/build-with-claude/embeddings

- https://app.flourish.studio/visualisation/28149706/edit

  - helps with visualising data

- https://www.pinecone.io/

  - vector database as a service

- https://learn.microsoft.com/en-us/semantic-kernel/frameworks/agent/

  - Agent Framework (old Semantic Kernel)